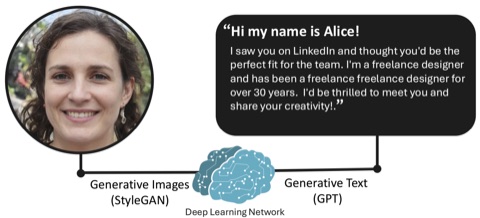

The Happy Lab studies how people shape the security, privacy, and real-world use of AI systems. We investigate where human factors create new vulnerabilities, where they unlock better defenses, and how to build safer AI systems in practice.

Recent News

May 25, 2026 Humans of Generative AI @ CVPR, a workshop on understanding how sociotechnical insights can inform technical AI design, is coming to Denver in just under two weeks. Hope to see you there!

May 19, 2026 Two papers were conditionally accepted to SOUPS 2026! Congrats to the authors!

April 15, 2026 Our paper with Drs. Lucy Qin and Elissa M. Redmiles from Georgetown University, "'Unlimited Realm of Exploration and Experimentation': Methods and Motivations of AI-Generated Sexual Content Creators", was accepted to FAccT!

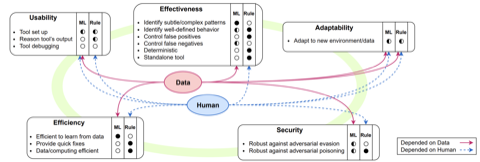

January 19, 2026 Our work with the SEFCOM and TSP Lab, "I Can SE Clearly Now: Investigating the Effectiveness of GUI-based Symbolic Execution for Software Vulnerability Discovery", has been conditionally accepted to CHI 2026. Congrats, all!

Research Areas

AI-Enabled Abuse

How people abuse AI systems, and how people perceive and respond to abusive AI-generated media.

AI Sexual Content • Bias in AI Media Moderation • Perceptions of AI Media

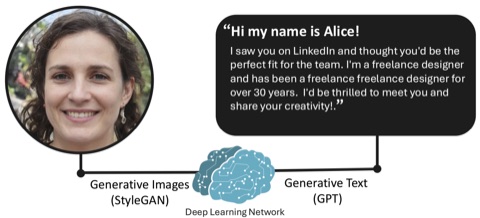

How AI can be integrated into security-sensitive environments.

How sociotechnical factors impact real-world security and privacy of AI.

Usable security and privacy, system security, and evaluation of HCI methodology.

Symbolic Exec GUI • Sociodemographics • Audit Log SoK • Bot Survey Fraud • Survey Fraud SoK • Privacy Zones

Interested in working with the group?

The lab site is the best place for current openings, application details, and ways to get involved.